[Role]

Interaction Designer, Experience Prototyper (Solo)

[Duration]

7 Weeks, 2025

[Tech Stack]

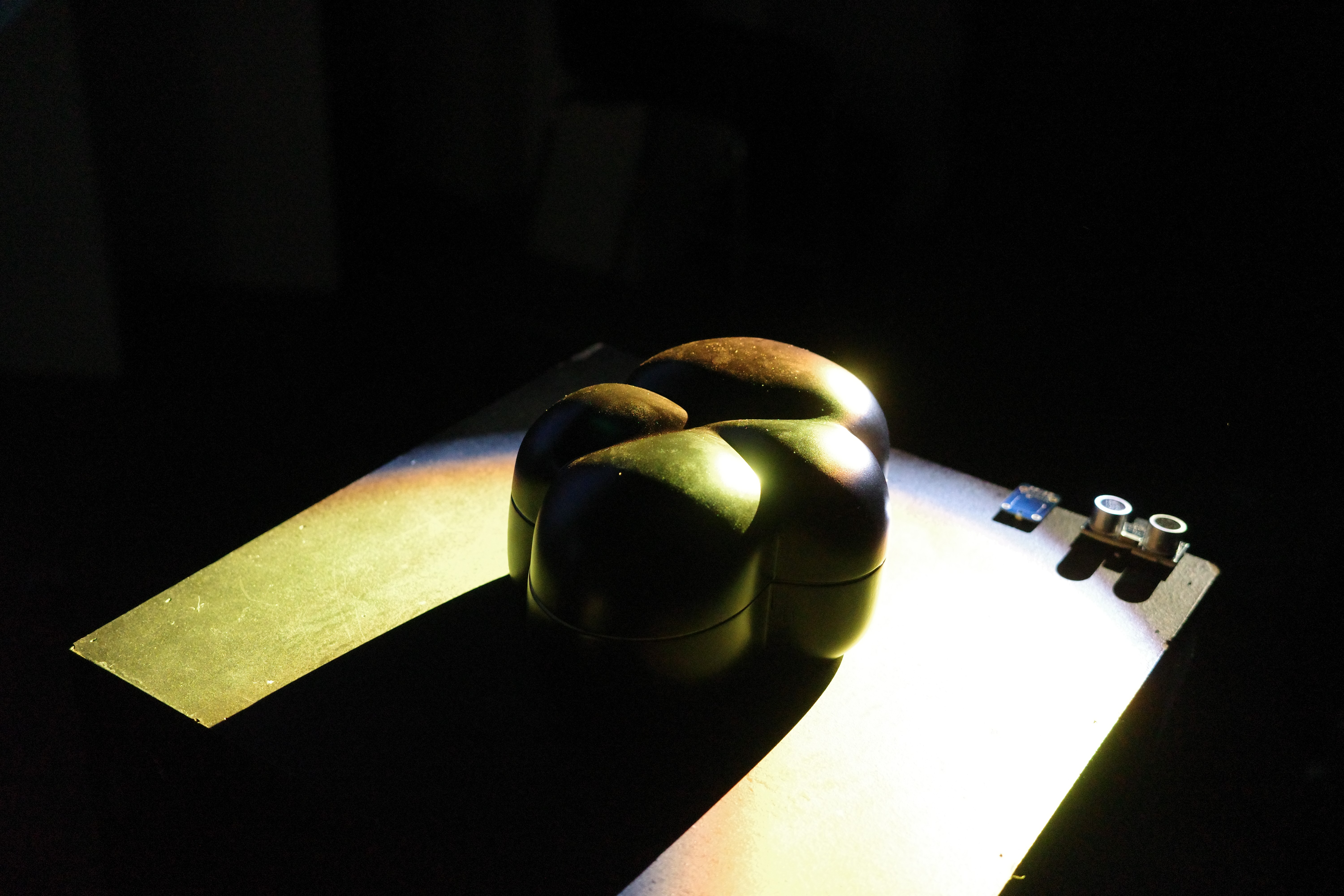

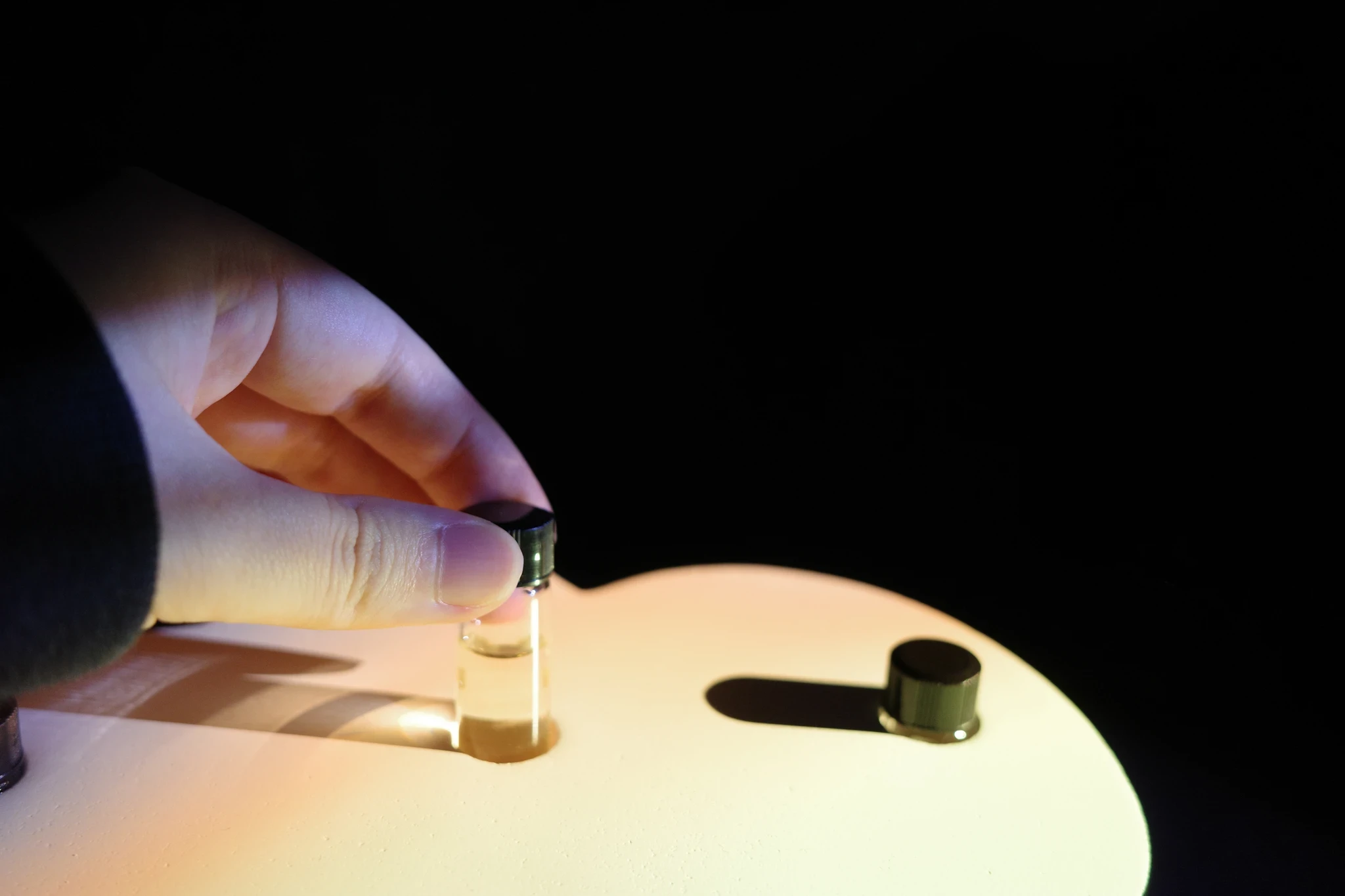

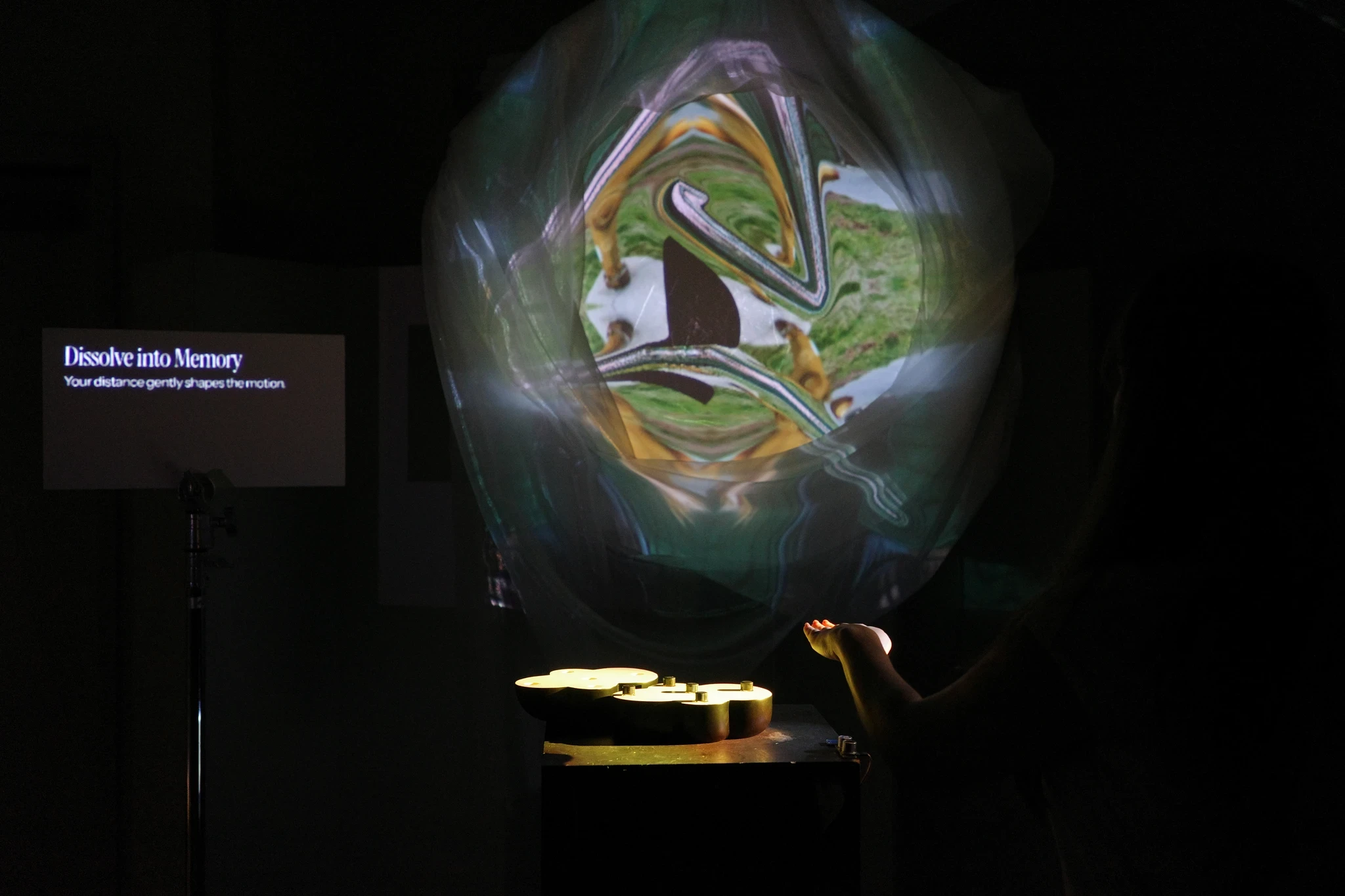

Arduino Uno, TouchDesigner, Ultrasonic & Capacitive Sensors

Spatial Computing

Multimodal UI

Hardware Prototyping

The Challenge & Logic

Engineering Intentionality Beyond the Screen

Digital interactions are increasingly confined to flat screens, stripping away the richness of human senses. My primary challenge was building an interface that responds directly to physical presence rather than traditional clicks.

To bridge the gap between raw sensor noise and seamless UX, I implemented a 'Hysteresis Loop' within the system’s logic. A programmed data threshold lets the system distinguish casual passersby from intentional users. Because a reading must clearly cross that threshold before the state changes, the visuals do not flicker, and transitions feel stable, deliberate, and human-centered.
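For readers curious how such a loop can look in code, here is a minimal sketch in Arduino-compatible C++. It is not the project's actual firmware. The `PresenceGate` name, the two-threshold layout, and the 60 cm / 90 cm values are all assumptions made for illustration. It shows the common form of hysteresis, where the distance needed to engage is tighter than the distance needed to release, so readings that hover between the two leave the state untouched.

```cpp
// Illustrative sketch only: names and threshold values are assumptions,
// not taken from the original project.
struct PresenceGate {
    float enterCm;        // viewer must come closer than this to engage
    float exitCm;         // viewer must move farther than this to release
    bool engaged = false; // current state, starts idle

    PresenceGate(float enter, float exit) : enterCm(enter), exitCm(exit) {}

    // Feed one distance reading (in cm) and get the stable state back.
    bool update(float distanceCm) {
        if (!engaged && distanceCm < enterCm) {
            engaged = true;   // crossed the inner threshold: intentional approach
        } else if (engaged && distanceCm > exitCm) {
            engaged = false;  // crossed the outer threshold: viewer has left
        }
        // Readings between enterCm and exitCm never change the state,
        // which is what suppresses flicker from noisy samples.
        return engaged;
    }
};
```

On an Arduino Uno, `update()` would typically be called once per `loop()` iteration with the latest ultrasonic reading, and the returned flag forwarded over serial to TouchDesigner. That wiring is likewise an assumption here.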
